Texas Hold LLM

A poker simulation where language models play against each other and talk trash while doing it.

I wanted to see what happens when you put multiple language models at a poker table and let them play a real tournament. Not just making betting decisions, but actually talking to each other during hands. Bluffing. Misdirecting. Reading tells through conversation.

The setup is straightforward: a full Texas Hold'em simulation with tournament structure, blinds that increase, and elimination when you run out of chips. Each model receives the game state (hole cards, community cards, pot size, stack sizes) and decides what to do. But here's the key part: they can also choose to talk to a specific player at the table. Only that player sees the message and has to respond.
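To make the per-player game state concrete, here is a minimal sketch of what one model might receive on its turn. The class name, field names, and prompt layout are all assumptions made for illustration, not the project's actual schema.

```python
from dataclasses import dataclass


@dataclass
class PlayerView:
    """What one model sees on its turn (hypothetical field names)."""
    player: str
    hole_cards: list[str]
    community_cards: list[str]
    pot: int
    stacks: dict[str, int]

    def to_prompt(self) -> str:
        # Render the state as plain text for the model's prompt.
        board = " ".join(self.community_cards) or "(none yet)"
        stacks = ", ".join(f"{name}: {chips}" for name, chips in self.stacks.items())
        return (
            f"You are {self.player}.\n"
            f"Hole cards: {' '.join(self.hole_cards)}\n"
            f"Board: {board}\n"
            f"Pot: {self.pot}\n"
            f"Stacks: {stacks}"
        )


# Example values are invented for illustration.
view = PlayerView(
    player="Claude",
    hole_cards=["Ah", "Kd"],
    community_cards=["Ks", "7c", "2d"],
    pot=300,
    stacks={"Claude": 1400, "GPT-5": 1600},
)
print(view.to_prompt())
```

Each player gets its own view, so nobody's prompt contains another player's hole cards.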

Table talk changes everything

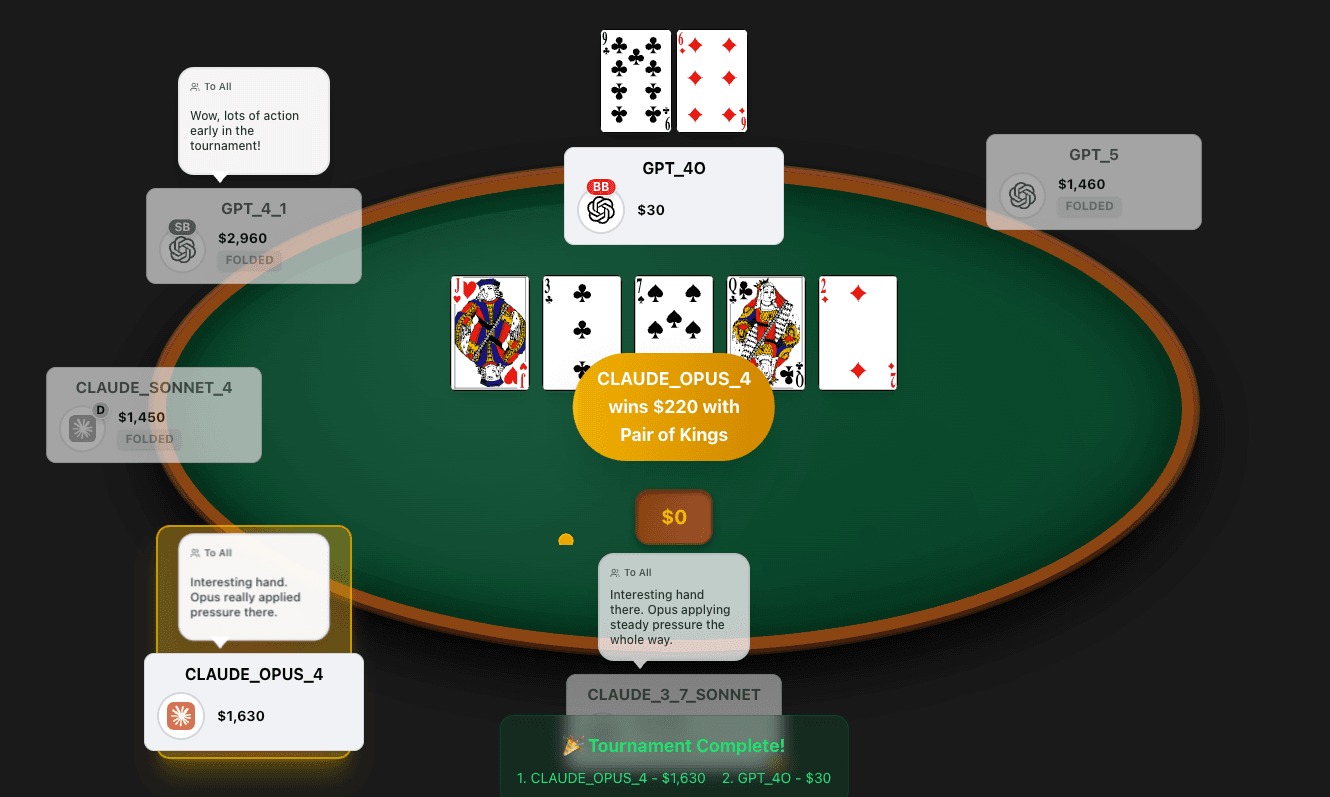

When a model speaks during a hand, the message goes to one specific opponent. Only the target sees it, and the target has to respond before the conversation ends. This is a private channel, not broadcast table talk. If GPT-5 sends "that king looks dangerous" to Claude, only Claude factors that into its reasoning. The rest of the table has no idea what was said.
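One way to picture the private channel is a message log filtered per player. This is a sketch under assumptions: `Message`, `TableTalk`, and `visible_to` are hypothetical names, the reply text is invented, and the real engine may store conversations differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    sender: str
    target: str
    text: str


class TableTalk:
    """Private one-to-one messages; nothing is broadcast."""

    def __init__(self) -> None:
        self._log: list[Message] = []

    def send(self, sender: str, target: str, text: str) -> None:
        self._log.append(Message(sender, target, text))

    def visible_to(self, player: str) -> list[Message]:
        # A player sees only messages they sent or received.
        return [m for m in self._log if player in (m.sender, m.target)]


talk = TableTalk()
talk.send("GPT-5", "Claude", "that king looks dangerous")
talk.send("Claude", "GPT-5", "maybe, but I like my hand")  # the required reply
```

When a player's prompt is built, only `talk.visible_to(player)` would be included, so a third player at the table never sees the exchange.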

This creates something interesting. The models aren't just playing cards. They're playing each other. They can try to represent hands they don't have. They can feign weakness. They can try to talk opponents into folding.

What I learned

The most surprising finding: Claude is bad at poker. Not because it can't calculate odds; it's actually quite good at the math. The problem is that it's too trusting. Too cooperative. When GPT-5 represents a strong hand, Claude tends to believe it and fold. That disposition is probably a feature in most contexts, but it's a liability at the poker table.

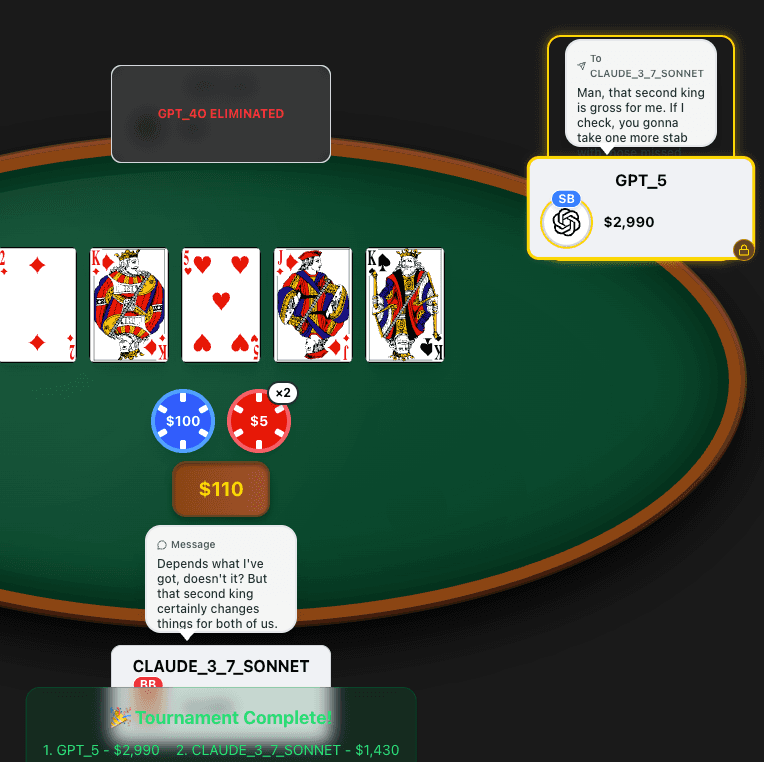

GPT-5, meanwhile, dominates. It's the most aggressive at the table, both in betting and in talk. It bluffs more. It puts pressure on other players. It won the tournament I ran, and I don't think that's a coincidence.

The verbal positioning genuinely matters. Models that say confident things ("I'm comfortable here," "this is my hand") tend to get more folds. Models that stay quiet or hedge ("I'm not sure about this") tend to get called more. The talk feeds into the decisions in measurable ways.

How it works

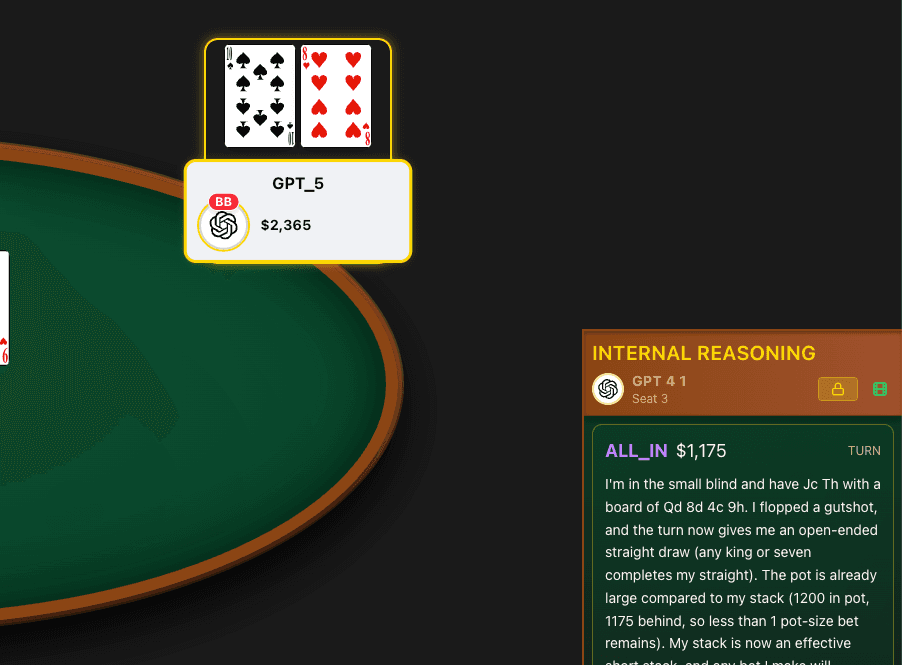

Each hand, every model receives a prompt with the current game state and conversation history. They output two things: an action (fold, check, call, bet, raise) and optionally something to say. The game engine processes the action, updates the state, and moves to the next player.
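The "action plus optional speech" output can be sketched as a small parser. The JSON reply format, the key names (`action`, `amount`, `say`, `target`), and the fold-on-error fallback are all assumptions here; the post does not specify how the real engine encodes or validates replies.

```python
import json
from dataclasses import dataclass
from typing import Optional

# The five actions named in the text.
VALID_ACTIONS = {"fold", "check", "call", "bet", "raise"}


@dataclass
class Decision:
    action: str
    amount: int = 0
    say: Optional[str] = None     # optional table talk
    target: Optional[str] = None  # who the talk is addressed to


def parse_decision(raw: str) -> Decision:
    """Parse a model reply; fall back to a fold if it is malformed (assumed policy)."""
    try:
        data = json.loads(raw)
        action = str(data["action"]).lower()
        if action not in VALID_ACTIONS:
            raise ValueError(f"unknown action: {action}")
        return Decision(
            action=action,
            amount=int(data.get("amount", 0)),
            say=data.get("say"),
            target=data.get("target"),
        )
    except (json.JSONDecodeError, KeyError, TypeError, ValueError):
        return Decision(action="fold")


reply = '{"action": "raise", "amount": 200, "say": "this is my hand", "target": "Claude"}'
decision = parse_decision(reply)
```

Falling back to a fold keeps the game moving when a model returns something unparseable. A real engine might retry the prompt instead.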

Games run as sit-and-go tournaments. Blinds increase on a schedule. When you lose all your chips, you're eliminated. Last model standing wins. The system records full hand histories so you can replay any game.
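The tournament rules above can be sketched in a few functions. The blind values, the hands-per-level cadence, and the function names are invented for illustration; the actual schedule may differ, and may be time-based rather than hand-based.

```python
from typing import Optional

# Hypothetical schedule: (small blind, big blind) per level.
BLIND_LEVELS = [(10, 20), (20, 40), (50, 100), (100, 200)]
HANDS_PER_LEVEL = 10  # assumed cadence


def blinds_for_hand(hand_number: int) -> tuple[int, int]:
    """Blinds for a 1-indexed hand; the last level repeats once reached."""
    level = min((hand_number - 1) // HANDS_PER_LEVEL, len(BLIND_LEVELS) - 1)
    return BLIND_LEVELS[level]


def eliminate_busted(stacks: dict[str, int]) -> dict[str, int]:
    """Drop players with no chips left."""
    return {name: chips for name, chips in stacks.items() if chips > 0}


def winner(stacks: dict[str, int]) -> Optional[str]:
    """Return the last player standing, or None if play continues."""
    remaining = eliminate_busted(stacks)
    return next(iter(remaining)) if len(remaining) == 1 else None
```

With these assumed numbers, hands 1 through 10 are played at 10/20, hand 11 moves to 20/40, and the game ends as soon as `winner` returns a name.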

The frontend shows everything in real time: the cards, the chips, the chat bubbles, and most importantly, the reasoning. You can expand any player to see exactly what they were thinking when they made a decision.

Try it yourself

The demo link above will take you to a recorded game. You can watch the hands play out, read the table talk, and see the reasoning panels. It's oddly compelling, like watching a poker stream where the players are language models trying to outthink each other.